The small tech neighbourhood

Building and supporting sustainable small tech on a human scale

We often hear of small tech as a more ethical and scalable alternative to US-style big tech and the unsustainable cycles of pump-and-dump fueled by the VC economic model.

But, depending on who you ask, you may get different definitions of “small tech”.

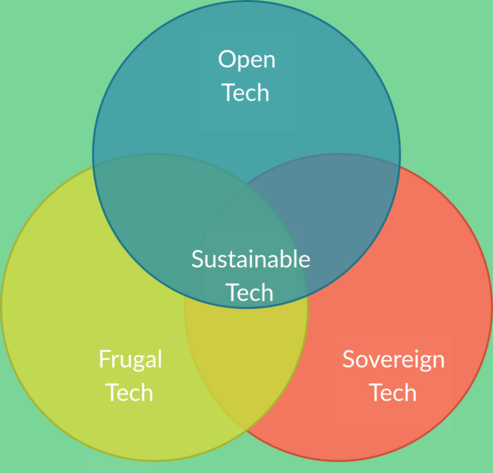

This article goes through several possible definitions of small/open tech, their pros and cons, and proposes a “sustainable open neighbourhood” approach at the intersection of those models.

Frugal tech

A generally agreeable definition of small tech is that of frugal tech.

Frugal engineering isn't something particularly new. It's a principle that's been applied for decades in the design and engineering of technological products.

Often targeted at developing countries, it aims to reduce complexity and costs by stripping out all non-essential features.

Think of Musk's dreams of the “everything app”, modeled after the dystopian Chinese tech.

Frugal tech is a step in the opposite direction. Instead of all-encompassing products that mostly benefit their makers (by providing them with disproportionate leverage over people and whole countries, by funnelling financial, behavioural and commercial data from both users and other businesses into the same entity, and by turning it into a de facto monopoly that is too big to fail and has as much power as a whole country), frugal tech focuses on the “one tool for one purpose” approach – a direct corollary of the UNIX philosophy.

The problem

Frugal tech addresses some of the core concerns with traditional big tech.

Smaller tools that do only one job and do it well solve the “all eggs in one basket” problem that makes big tech an infectious disease that gradually swallows larger and larger portions of the industry, of the market and of society as a whole.

Instead of selling you an all-in-one Swiss knife, frugal tech sells you a screwdriver, a wrench, a hammer, a knife and a corkscrew.

However, nothing prevents a particular screwdriver maker from growing into a monopoly in its own niche.

Or from turning into a subscription-based service that, rather than selling you a screwdriver that you own, forces you to rent its tools – a subscription whose cost you need to pay every month whether or not you use the screwdriver.

Frugal tech guarantees a separation of domains reflected into a separation of products which makes it harder for the whole industry to collapse into a black hole of enshittification. But it doesn't guarantee that individual niches or products will be immune from such collapse.

Plus, it doesn't say much about the openness of the proposed solutions, and what defense mechanisms users have if one of their tools goes rogue (other than reinventing its wheel).

The sovereign stack

Another popular option nowadays, especially now that the United States have gone rogue with their little fascist experiment, is the “sovereign tech stack”.

This is a topic that has been hovering among European policymakers for a while. It's embodied by Macron's idea for “European champions” and echoed by Draghi's report on European competitiveness.

The idea is simple: relying solely on US big tech is a liability. Funnelling data about your own citizens to an unregulated and increasingly hostile market without providing them with alternatives is a liability. And this idea has been turbo-charged now that the US is officially a fascist cesspit that has lost the trust of its allies, which are currently in the middle of a frantic process of diversification, divesting and boycotting.

The problem

The problem with the “European champions” approach is the same as the “American champions” approach.

It wouldn't have a problem with the level of concentration of power of the likes of Google or Microsoft, or even Palantir, if those companies were European.

It doesn't have a problem with companies like Spotify or Booking.com, and their concentration of power in their respective industries, because those are European companies. On the contrary, those companies would fit the definition of “European champions” that could compete with American (or Chinese) tech.

When those reports talk about “competitiveness” they usually don't mean healthy competition within the European market. They mostly mean “competitiveness” against the tech giants of other industrial superpowers. If the market in Europe remains concentrated around those “champions” and entry barriers remain high for everyone, so be it.

According to this degenerate libertarian doctrine, picking the winners rather than the willing is a fair price to pay for international significance and relevance.

This approach is the poster child of the neoliberal ideology that still infects the mainstream economic doctrine. A degenerate vision for capitalism that dumps what its founding fathers themselves saw as an indispensable mechanism for fairness, self-preservation and self-legitimization (openness and competition), while fostering its drawbacks (concentration of power and formation of monopolies and cartels) as the final objective for a definition of “competitiveness” that only applies to external markets.

It may work in containing the problem of globalized big tech, but it simply turns into a problem of local giants.

Some may argue that the EU also envisions a strong role for open-source solutions in its intentions. But I would object that such a vision is a pure coincidence, due to the fact that solid open-source solutions are already out there and they can be a better alternative to reinventing wheels. It's the free beer on the bar counter that it'd be a shame not to grab. If the EU really believed in open-source then it wouldn't have limited itself to investing just a couple of million through the NGI initiative, while America and China invest trillions in their tech. And it wouldn't need to submit a “call for evidence” for the impact and benefits of open-source: it would already know that open-source constitutes the backbone of its technological stack, and it would already have put its wallet where its mouth is.

The open stack

The drawbacks of both the frugal tech and the sovereign tech approaches eventually boil down to the fact that neither makes free and open-source solutions a core part and necessary condition of their solutions, and neither has built-in mechanisms to prevent the rise of (local) monopolies.

Any solution that isn't truly open is vulnerable to the same industry decay issues that currently affect big tech – market concentration, monopoly and increasingly high entry barriers for competitors.

Open protocols are the basis for any sustainable technology.

The Internet as we know it would have never been born if AT&T and a bunch of other private companies had pushed for competing closed and mutually incompatible protocols rather than TCP/IP.

The Web as we know it would have never been born if AOL and Microsoft had pushed for competing closed and mutually incompatible protocols rather than eventually adopting CERN's open HTTP. We would have ended up with fenced browsers that could only render a subset of the websites. The “Works with Internet Explorer” era would have lasted well into our days – and it would have gotten worse and worse.

And, in case you didn't notice, big tech's push towards closed mobile apps rather than Web-based solutions accessible from any browser is exactly an attempt to undo that progress.

Each “XYZ works better in the app” banner you see on a Website is a clear statement. It says “yes, with modern Web standards we could definitely provide you a good service, a good PWA (Progressive Web App) could definitely do the job, but we choose not to, and we'll make it increasingly hard for you to use our product in a browser. Because being sandboxed in a browser based on open protocols limits our ability to scoop up and sell data about you, it lowers entry barriers to competitors who could reverse engineer our frontend or API layer, and it limits the control we have over which devices and operating systems can run our service”.

The right thing to do with these products and services is simple: BOYCOTT. Whenever possible, boycott them, uninstall them and delete your accounts. Those who build or sell you those products are morally bankrupt, they are enemies of everything that made the Web the great technology that it is today, and they are desperately trying to turn the clock back to the era of stand-alone apps after thriving thanks to the Web itself.

Competition should exist around user-facing products, not around protocols. Just like competition between train companies usually manifests through different offers of train services, connections, schedules, pricing models or additional services – not by building different and mutually incompatible railways and stations.

Open code is the next foundation for any sustainable product. Avoiding solutions whose source code is not publicly available should be a moral obligation.

Not only that: you should also avoid solutions that are technically open but impose custom CLAs (Contributor License Agreements) upon their contributors.

The reason is simple: those CLAs are increasingly often just a way to bypass the open-source licenses that those projects are supposed to be released under.

Without such a CLA, if a supposedly “open” product goes rogue at some point and tries to pull the rug from under everyone's feet, with a license change and/or a codebase visibility change, then contributors are usually allowed to pull the rug back.

Strict open licenses like GPL-3 and AGPL-3 make the “pull the rug” move difficult:

“...you may not impose any further restrictions on the exercise of the rights granted or affirmed under this License.”

Relicensing or closing the source code, under such terms, is equivalent to copyright infringement.

Previous contributors hold their copyright over their own code, and have the right to revoke their license in case of unilateral relicensing.

More liberal licenses, like MIT or BSD-3, on the other hand, are not as strict. But in case of relicensing the project under the former license must remain available, and forks are always possible.

Open-source is not only an ethical stance: it's a guarantee that the product that you use won't decay when a new VP or CEO is hired, or when shareholders demand more aggressive “monetization/profitability plans”.

Open APIs are also a necessary condition for sustainable tech products, just like a good electronic chip is expected to come with a well-documented data sheet to integrate it into wider projects.

It is not, however, a sufficient condition.

Increasingly often over the past decade commercial software solutions have been providing an API to interact with their systems, and therefore started calling their products “open”.

Having a public interface to communicate with a system isn't a guarantee of openness, for the same reason that a centralized IBM mainframe is neither “open” nor decentralized just because it provides a SUBMIT command.

The problem

FOSS has, of course, a “free beer on the bar counter” issue, a.k.a. the “tragedy of the commons”.

What you don't pay for, in one way or another, is taken for granted.

Burnout among open-source developers is extremely common.

GitHub projects with deprecation or abandonment warnings are increasingly common. And nobody likes to rely on a project that may die tomorrow because of a burned-out maintainer.

The stance taken by many FOSS developers (“if you want to keep the project alive, or if you want feature X, just fork it and implement it yourself”) is as dumb, elitist and unscalable as it sounds. FOSS contributions take a lot of my time. I have contributed over time to more than 1M lines of code on GitHub projects (which doesn't count all the code I've contributed on other forges). Yet even an experienced developer can't realistically fork, modify and maintain every single project that gets abandoned or gets a wontfix issue. Some level of delegation is indispensable in any functioning industry.

Several alternatives have been proposed over time to make FOSS development more sustainable and attractive. Among them:

Donations/crowdfunding: probably the most straightforward approach, yet the one with the lowest returns for developers. There are dashboards out there that track sponsorships across GitHub projects, and they provide a clear idea of how much the average FOSS developer makes. “Superstar” developers like Evan You (author of the Vue.js framework, among other things) have more than 100k followers on GitHub, yet they make less than $1000/month from sponsors. Large projects like Neovim raise less than $2500/month – and it has more than 100 contributors with more than 25 commits. Imagine running a medium-sized company with 100 developers with a budget of $2500/month for the whole engineering department.

Corporate funding: this is common for very large projects (like the Linux kernel, or the Apache foundation projects, or Kafka) that are so critical to large companies that those companies are happy to fund their development. This is a very high bar to clear, though: even the richest companies on earth would be happy to just do an `npm install` of your package if you're a small developer who built a small/medium JavaScript module, without even thanking you. Plus, large corporate funding to open projects usually comes with strings attached, and corporate influence over the priorities of the project.

Dual licensing: this is very common for medium/large projects that are critical in business contexts. If those businesses won't fund you directly, then your product isn't open to them – but it'll stay open to any other private user. Common for products like MySQL, Redis (after its clumsy failed experiment with SSPL) and Elastic. This is a reasonable compromise that makes sure that those who use those products to make big profits also contribute back, while keeping the products free and open for everyone else. The problem is that these licensing changes usually come with headaches both for other downstream developers and end users. Strict licenses like SSPL are usually not considered free, therefore their usage often means that the whole underlying piece of software may no longer be considered free, which usually comes with nasty side effects – such as the removal of the package from official Linux distro repositories.

Service-based revenue models: think of Red Hat's trainings, consulting and specialized support. Or of a network service that is free and open for end-users who want to build it and run it on their machines, but funds its development by selling managed solutions to those who don't want to run the software on their own machines.

Public funding: this is perhaps the ideal outcome. Public money means public software, which means that public administrations should prefer open solutions over closed ones, which are usually imposed through active lobbying. And public institutions benefiting from public software should close the loop by funding public software. Which means serious funding. If Germany alone spends $482M a year just on Microsoft licenses, but then the EU as a whole barely allocates $27M to fund its open infrastructure through NGI, and FOSS funding even disappeared from the 2025 budget, replaced by a “call for evidence”, as if open-source developers had to continuously prove that what they build (and what already runs most of the EU institutions) actually has value and is worth funding, then we have a problem. The impression we get is that institutions like free software just because they like to drink a free pint of beer, because it saves taxpayers money. And that's neither ethical nor sustainable.
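To put the funding figures quoted above in perspective, here is a minimal back-of-the-envelope sketch in Python. It only uses the numbers mentioned in the text, treated as rough bounds rather than exact data; the constant and function names are made up for illustration, and the text doesn't state the period covered by the NGI figure, so the ratio is indicative at best.

```python
# Figures quoted in the text, used as rough bounds (not exact data).
NEOVIM_MONTHLY_SPONSORSHIP_USD = 2500    # "less than $2500/month"
NEOVIM_CONTRIBUTORS = 100                # "more than 100 contributors"
GERMANY_MICROSOFT_LICENSES_USD = 482e6   # per year, Germany alone
EU_NGI_FUNDING_USD = 27e6                # period not stated in the text


def per_contributor(monthly_budget: float, contributors: int) -> float:
    """Split a monthly budget evenly across contributors."""
    return monthly_budget / contributors


def spending_ratio(closed_spend: float, open_spend: float) -> float:
    """How many times larger the closed-software spend is."""
    return closed_spend / open_spend


neovim_share = per_contributor(
    NEOVIM_MONTHLY_SPONSORSHIP_USD, NEOVIM_CONTRIBUTORS
)
ratio = spending_ratio(GERMANY_MICROSOFT_LICENSES_USD, EU_NGI_FUNDING_USD)

# Both inputs are bounds, so the share is an upper bound as well.
print(f"At most ${neovim_share:.2f} per contributor per month")
print(f"Licenses vs. open funding: about {ratio:.1f}x")
```

Even with generous rounding, an even split leaves each regular Neovim contributor with the price of a couple of pub lunches per month, while a single country's license bill dwarfs the open-infrastructure figure by more than an order of magnitude.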

The small tech neighbourhood appeal

In an ideal world where antitrust regulations still work, and sizeable public investments into the public good are still a thing, governments would act to break up monopolies before they grow too big to fail, they would impose strict regulations and hefty fines on the businesses that abuse either their users or the rules for a healthy free market, and they would make sure that developers that contribute to the public good get enough money to keep contributing to it. Contributing to the public good is a civic service, and therefore contributing to open-source code used by institutions and businesses should be a civic service. This is the literal bare minimum to keep the lights on and ensure that talented contributors are still sufficiently motivated to work on public software, and that such contributions aren't seen just as the occasional vocation of some privileged or socially active developers.

Unfortunately, we're not in that world yet. We're still in a world dominated by the neoliberal doctrine that everything should have a price tag, that it's not a government's job to have any opinion on industrial vision, and that uncontrolled concentration of power is an indicator of business success rather than a failure of the system.

But it doesn't mean that we, as citizens, are powerless.

When institutions and businesses fail, the fabric of society is usually held together by smaller communities that fill, on smaller scales, the gaps that should traditionally be filled by larger actors.

The models discussed in the previous paragraphs all have their pros and cons. But the most reliable and sustainable technology lies probably in their intersection. What you get in that intersection are products that are:

Frugal: easier to maintain for smaller teams of contributors because their scope is contained and their purpose clear. They don't try to embed AI features just because a product manager says that it's what investors want. They don't try to continuously expand their scope in order to take ground away from (potential or real) competitors. They don't have to keep pivoting every quarter because of spoiled shareholders expecting a continuous acceleration of the growth rate.

Sovereign: they don't create hard dependencies on other geopolitical actors that benefit from data and financial resources subtracted from your own local industries, in what is basically an act of digital colonialism. They foster the growth of your local ecosystems and create healthy internal flows of capital and talent that would otherwise relocate.

Open: their licenses are a guarantee against enshittification, and they are based on open protocols and APIs, which maximizes both healthy internal competition and the ability for others to build upon that work.

The local digital neighbourhood is perhaps the ideal embodiment of these principles.

It encourages a reality where there are as few intermediaries as possible between users and developers.

A reality where the author of your favourite blogging platform, photo editing app, library or search engine has a name, a surname and a direct contact, rather than being in a world of faceless corporations using AI chatbots as their primary interface with the outside world.

This has an obvious added value for end-users, but also for contributors.

A world where the users of our products are other people who provide us with invaluable direct feedback, and who are much more likely to donate to the project if they feel that they have a voice, is much better than a world where my thing may be automatically pulled into a big tech project through a thick network of dependencies, without me even knowing that my code is used there (and often even without the company knowing that).

It's the same as the difference between going to a McDonald's with a digital kiosk to place orders and going to a local pub where you can exchange a few words with the owner or other regular customers.

And I believe that self-hosting (or at least micro self-hosting) should also be an integral part of this solution.

The myth that self-hosting is an activity only for the super-tech savvy developer or sysadmin must go.

We've got computing power everywhere nowadays. A Raspberry Pi is cheap and it can already run a lot of stuff.

A mini PC, or an old laptop or tablet, or even an old Android phone with Termux, nowadays can definitely double as a server.

Stuff like SearXNG, Etherpad or WriteFreely can be easily self-hosted on a Raspberry Pi. Running them is as easy as pulling and running a Docker image, and once you run them you have your own search engine, or collaborative pastebin, or blogging platform. A big first step towards sovereignty.

Sure, it may involve a bit of a learning curve – but nothing as dramatic as 10-15 years ago. Sure, it may take some effort to learn a couple of things, migrate stuff or create a new community.

Just like it takes a bit of effort for someone who has eaten only McDonald's meals for the past 10-15 years to get used to the community at the local pub or buy a few groceries at the local supermarket.

But we all know that it's the right thing to do. We all know that a world where everyone keeps eating McDonald's everything, and thinking that that's the only viable solution, is neither a sustainable nor a healthy world.