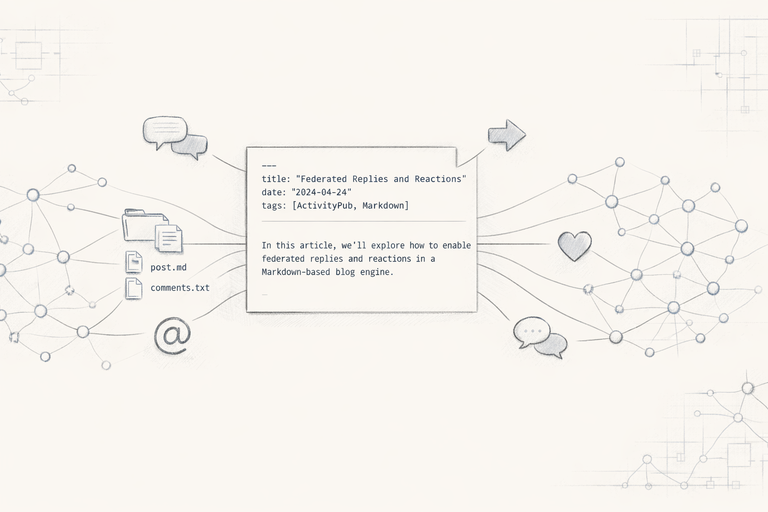

Federated Replies and Reactions in Madblog

Mar 15, 2026

Engage with the Web from plain text files

Madblog: A Markdown Folder That Federates Everywhere

Mar 10, 2026

A lightweight blogging engine based on text files, with native Fediverse and IndieWeb support

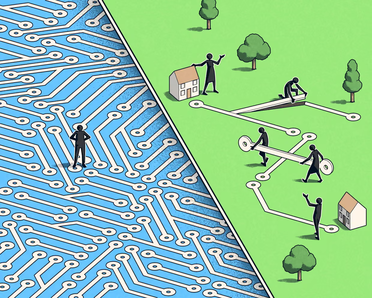

The small tech neighbourhood

Feb 23, 2026

Building and supporting sustainable small tech on a human scale

Webmentions with batteries included

Feb 11, 2026

A zero-cost library to integrate Webmentions in your website

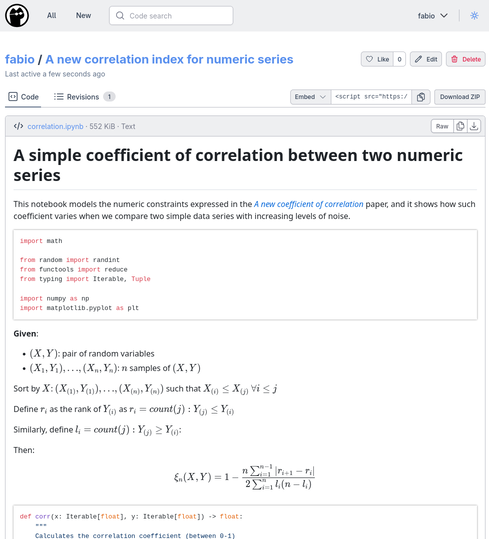

Render your Jupyter notebooks in OpenGist

Aug 03, 2025

No more Github links and no more sharing Jupyter tokens!

GPSTracker - A self-hosted alternative to Google Maps Timeline

Mar 24, 2025

Track your location without giving up on your privacy

In search of a new search

Aug 12, 2024

How to tackle Google's monopoly while making search better for everyone

Where's my time again?

May 31, 2024

Python, datetime, timezones and messy migrations

Some progress on the state of speech detection in Platypush (powered by Picovoice)

Apr 07, 2024

Many ambitious voice projects have gone bust in the past couple of years, but one seems to be more promising than it was a while ago.

ChatGPT, Bard, and the battle to become the "everything app"

Feb 07, 2023

AI-powered search assistants are crucial to ensure that the Web starts and ends on your platform

Web 3.0 and the undeliverable promise of decentralization

Jan 17, 2022

Why the crypto-based Web 3.0 can't deliver on its promises of greater data control, decentralization and scalability at the same time